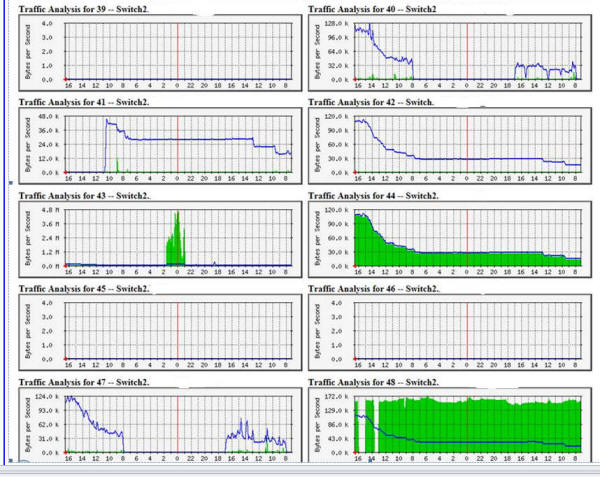

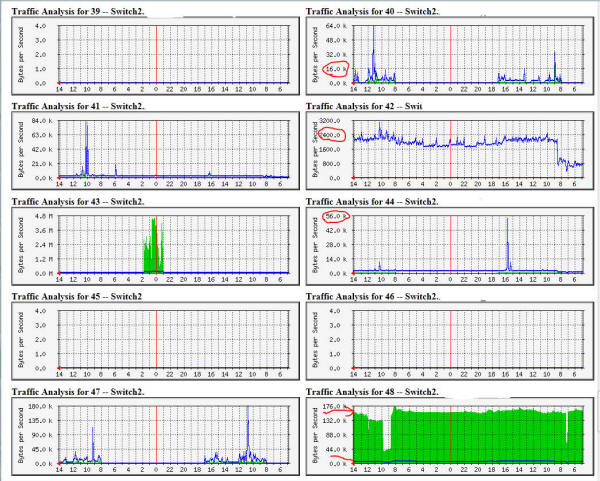

We're baaaaa-aaaack! This set of graphs runs from Wednesday afternoon back to Tuesday morning. You can see a relatively stable traffic pattern on most ports. I'm still not happy with how much traffic is hitting how many ports, but it is way better than Monday. Then Wednesday around 8:00 AM the traffic starts ramping up, up, up on every port that has a link on it:

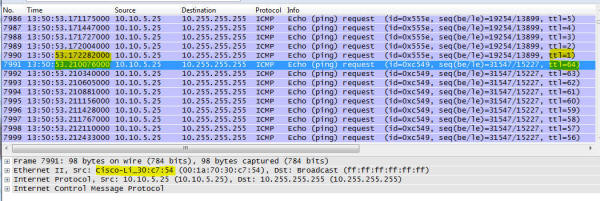

So something is still wrong... very wrong. The dead elephant, reanimated!

Remotely connecting and running a sniff on one of the servers:

And guess who is back from the dead? Yes, that Cisco / Linksys Wireless Access Point (WAP, specifically a model WAP 200) is doing broadcast pings again. Look at the time between successive pings (the time between TTL=1 and TTL=64), and also the gap between successive pings. Suffice to say, here it is Wednesday and the door system is going offline again.

I looked around and didn't see tons of multicasting, so the theory that the WAP was somehow reacting to those multicast packets is dead. It must be something about that WAP itself. Some research on the WAP 200 showed it was running firmware 1.0.14. The next stop was to check with Linksys support to see whether there was a newer release and whether this was a known, documented problem. I say that because not all companies are transparent about which bugs get fixed in which release; just look at most iPhone apps and the very generic "bug fixes" notes on many updates. I expect more from Cisco, and sure enough I was not disappointed. From the document here that discusses firmware release 2.0.6:

Resolved Caveats in Release 1.0.20

• PING floods are no longer observed when multiple access points

Well now, let's do a little math here... I left on Monday around noon, and here we are Wednesday at 1:50 PM, and the ping traffic from this device is hammering everything on this network as fast as it possibly can.

When the WAP was in this state, we couldn't do anything to it. So it was unplugged, set up on the bench, firmware upgraded, reconfigured, and redeployed. And here is the traffic two weeks later, same ports as shown above:

You have to look at the scales to see how much quieter those ports are. Port 40 was solid up over 100K; now it occasionally touches 16K, with one peak up at 64K. Ditto for the other ports. Port 48 is the source of the multicast data, thus the solid green - it is still arriving, but now that it only goes where it needs to go, the entire network is a much happier place.

But all that just means network traffic is way down. Useless traffic. The real indicator is that door system - it hasn't gone offline once! Which gave me a thought: given the sensitivity of the door system to traffic and the fact it is only a 10 Mb/s link, we can think of the door system as a canary in the coal mine - an early indicator that something is wrong with traffic flow on the network.

Monsters under the bed

There are likely still some lurking issues:

Months later, no other problems reappeared - so this case is closed!

Final Words...

If you found this helpful or not, please send me a brief email -- one line will more than do. If I saved you a bunch of time (and thus $$), and you wanted to show appreciation, sending a little love via PayPal or an Amazon gift card is also very much appreciated!
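
A postscript on the canary idea from earlier in this post: one rough way to put a number on "too much traffic for the door system" is to turn two readings of a port's byte counter into a utilization figure for its 10 Mb/s link. The sketch below is only an illustration. The function names, the 60-second sampling interval, and the 50% alarm threshold are all assumptions made up for the example, not values measured on this network, and counter wrap-around is ignored.

```python
# Hypothetical sketch: estimate utilization of a slow link (10 Mb/s, as on the
# door system) from two byte-counter samples, and flag it when it looks busy.
# The threshold and sampling interval are illustrative assumptions.

def link_utilization(bytes_before: int, bytes_after: int, interval_s: float,
                     link_bps: float = 10_000_000) -> float:
    """Return the fraction of link capacity used between two counter samples.

    Counter wrap-around is not handled in this sketch.
    """
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    bits_sent = (bytes_after - bytes_before) * 8
    return bits_sent / (interval_s * link_bps)


def canary_alarm(utilization: float, threshold: float = 0.5) -> bool:
    """Return True when utilization meets or exceeds the (assumed) threshold."""
    return utilization >= threshold


if __name__ == "__main__":
    # Two made-up samples taken 60 seconds apart on the door system's port.
    quiet = link_utilization(0, 7_500_000, 60)
    busy = link_utilization(0, 45_000_000, 60)
    print(f"quiet: {quiet:.0%}, alarm={canary_alarm(quiet)}")
    print(f"busy:  {busy:.0%}, alarm={canary_alarm(busy)}")
```

Where the counter samples come from (SNMP, the switch's web page, a script on a server) is left open here; the point is only that a slow, sensitive link gives a cheap early warning once you watch its load.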
